
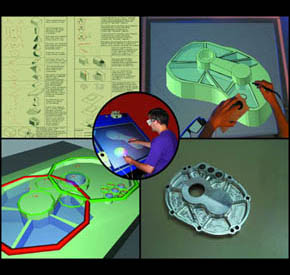

Telecollaboration is unique among the Center's research areas and driving applications in that it truly is a single multi-site, multi-disciplinary project. The goal has been to develop a distributed collaborative design and prototyping environment in which researchers at geographically distributed sites can work together in real time on common projects.

The Center's telecollaboration research embraces both desktop and immersive VR environments in a multi-faceted approach that includes:

Our current vision is ambitious and long-term, paired with a pragmatic strategy: projects designed to produce short-term results that serve as building blocks for more far-reaching goals.

In the long term, we want an environment that permits us to:

- Feel as if our real individual environments are physically joined along some common junctions, such as shared walls, or as if we are in the same virtual shared space as our collaborators.

- See and interact with collaborators as naturally as we do when we are in the same physical room: gesturing, pointing, and using all the subtle nuances of both verbal and nonverbal communication.

- Create (design), manipulate, and interact, in 3D, with shared objects, both real and synthetic.

In the short term, we propose:

- To use various displays with a wide spectrum of immersiveness:

  - Non-immersive desktop display.

  - Semi-immersive "fish tank" display.

  - Semi-immersive Active Desk*.

  - Immersive FakeSpace BOOM.

  - Fully immersive head-mounted display (HMD).

- To create a gestural interface to a full-featured design modeling system, using it to build a new high-resolution, wide field-of-view video camera.

- To design and implement a structured-light depth extraction scheme for limited real-time remote scene reconstruction that will allow remote display of both 3D avatars and their physical context.

To provide an integrated context that conveys how the sub-projects fit together in time, space, and objectives, we have created a narrative description.

In addition to providing a sequential description, this narrative also provides extensive links to the different projects as well as to related areas within the Center.

* A Responsive Workbench variant: a drafting table whose top face is a computer screen, with user interaction performed directly on the screen.

Telecollaboration Bibliography


Shared Virtual Environment

Wide FOV High-Resolution Camera Cluster

HiBall Tracker