Edge-based Blur Kernel Estimation Using Patch Priors

Libin Sun1

Sunghyun Cho2

Jue Wang2

James Hays1

1Brown University 2Adobe Research

Abstract

Blind image deconvolution, i.e., estimating a blur kernel k and a latent image x from an input blurred image y, is a severely ill-posed problem. In this paper we introduce a new patch-based strategy for kernel estimation in blind deconvolution. Our approach estimates a "trusted" subset of x by imposing a patch prior specifically tailored towards modeling the appearance of image edge and corner primitives. To choose proper patch priors we examine both statistical priors learned from a natural image dataset and a simple patch prior from synthetic structures. Based on the patch priors, we iteratively recover the partial latent image x and the blur kernel k. A comprehensive evaluation shows that our approach achieves state-of-the-art results for uniformly blurred images.

Paper

patchdeblur_iccp2013.pdf, 11MB

Supplementary Materials

1. Mathematical Derivations
2. Additional Results

Slides

SUN_patchdeblur_ICCP2013.zip

Citation

Libin Sun, Sunghyun Cho, Jue Wang, James Hays. Edge-based Blur Kernel Estimation Using Patch Priors.

Proceedings of the IEEE International Conference on Computational Photography (ICCP), 2013.

Bibtex

@inproceedings{patchdeblur_iccp2013,

author = {Libin Sun and Sunghyun Cho and Jue Wang and James Hays},

title = {Edge-based Blur Kernel Estimation Using Patch Priors},

booktitle = {Proc. IEEE International Conference on Computational Photography},

year = {2013}}

MATLAB Code

Available upon request; please contact Libin Sun (lbsun at cs.brown.edu).

Test Set

our synthetic test set (blurred + 1% noise) [80 images x 8 kernels = 640 images, 240MB]

Full Results

all results [80 images x 8 kernels x 7 methods, with (cropped) ground truth images and kernels, 1.3GB]

All evaluations are done using the center portion of the images, discarding 50 pixels from each border. PSNR, SSIM and error ratios are computed based on best alignment. Note: a few of the methods failed to produce output on some of the test images because their code crashed, so fewer than 640 output images are included for these methods.

reference images [80 images x 8 kernels = 640 images, 243MB]

These images are deblurred using the ground truth kernels, with Zoran's EPLL-GMM [ICCV 2011] as the final non-blind deconvolution step. This is considered the performance upper bound when computing error ratios.
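The evaluation protocol described above (center crop, PSNR, and error ratio against the reference deblurring) can be sketched roughly as follows. This is an illustrative sketch, not the authors' evaluation code. The function names are hypothetical, images are assumed to be grayscale lists of rows with intensities in [0, 1], and the error ratio is assumed to follow the usual convention of dividing the sum of squared differences (SSD) of a blind result by the SSD of the reference result. The best-alignment search and SSIM are omitted.

```python
import math

BORDER = 50  # pixels discarded from each border, as stated above


def crop_center(img, border=BORDER):
    """Return the center portion of a 2-D image given as a list of rows."""
    if len(img) <= 2 * border or len(img[0]) <= 2 * border:
        raise ValueError("image too small for the requested border")
    return [row[border:len(row) - border]
            for row in img[border:len(img) - border]]


def ssd(a, b):
    """Sum of squared differences between two equally sized images."""
    return sum((pa - pb) ** 2
               for ra, rb in zip(a, b)
               for pa, pb in zip(ra, rb))


def psnr(result, truth, peak=1.0, border=BORDER):
    """PSNR in dB on the center crop (assumes intensities in [0, peak])."""
    r, t = crop_center(result, border), crop_center(truth, border)
    mse = ssd(r, t) / (len(t) * len(t[0]))
    return math.inf if mse == 0 else 10.0 * math.log10(peak ** 2 / mse)


def error_ratio(result, reference, truth, border=BORDER):
    """SSD of a blind result over SSD of the reference (assumed definition).

    `reference` is the image deblurred with the ground truth kernel.
    The reference is assumed to differ from `truth`, so the denominator
    is nonzero.
    """
    t = crop_center(truth, border)
    return ssd(crop_center(result, border), t) / ssd(crop_center(reference, border), t)
```

Under this assumed definition, an error ratio near 1 means the blind result is about as close to the ground truth as the reference obtained with the true kernel, and larger values indicate a worse kernel estimate.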

Sample Results

Quantitative Evaluation

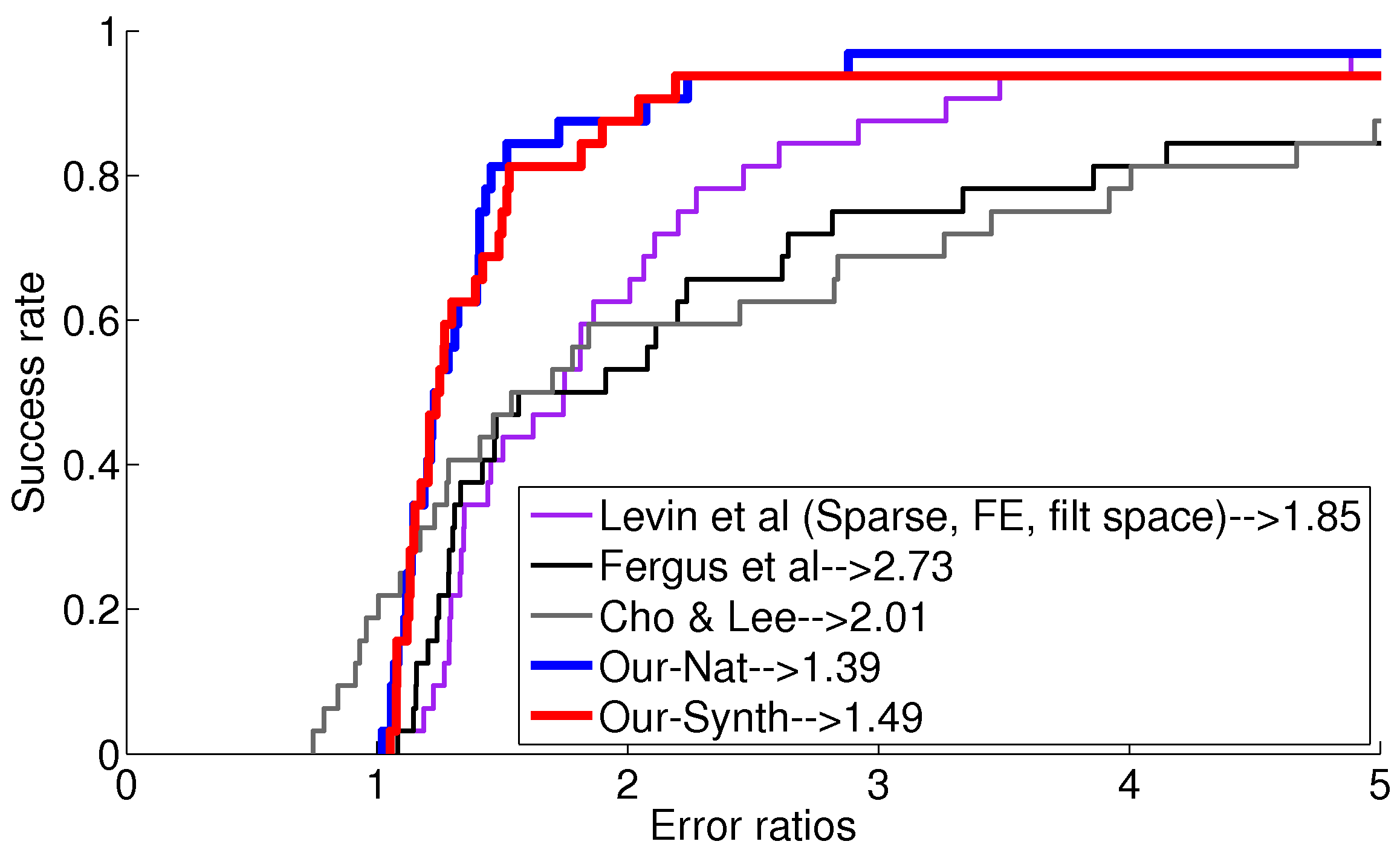

Results on the test set (32 images) from Levin et al. 2011

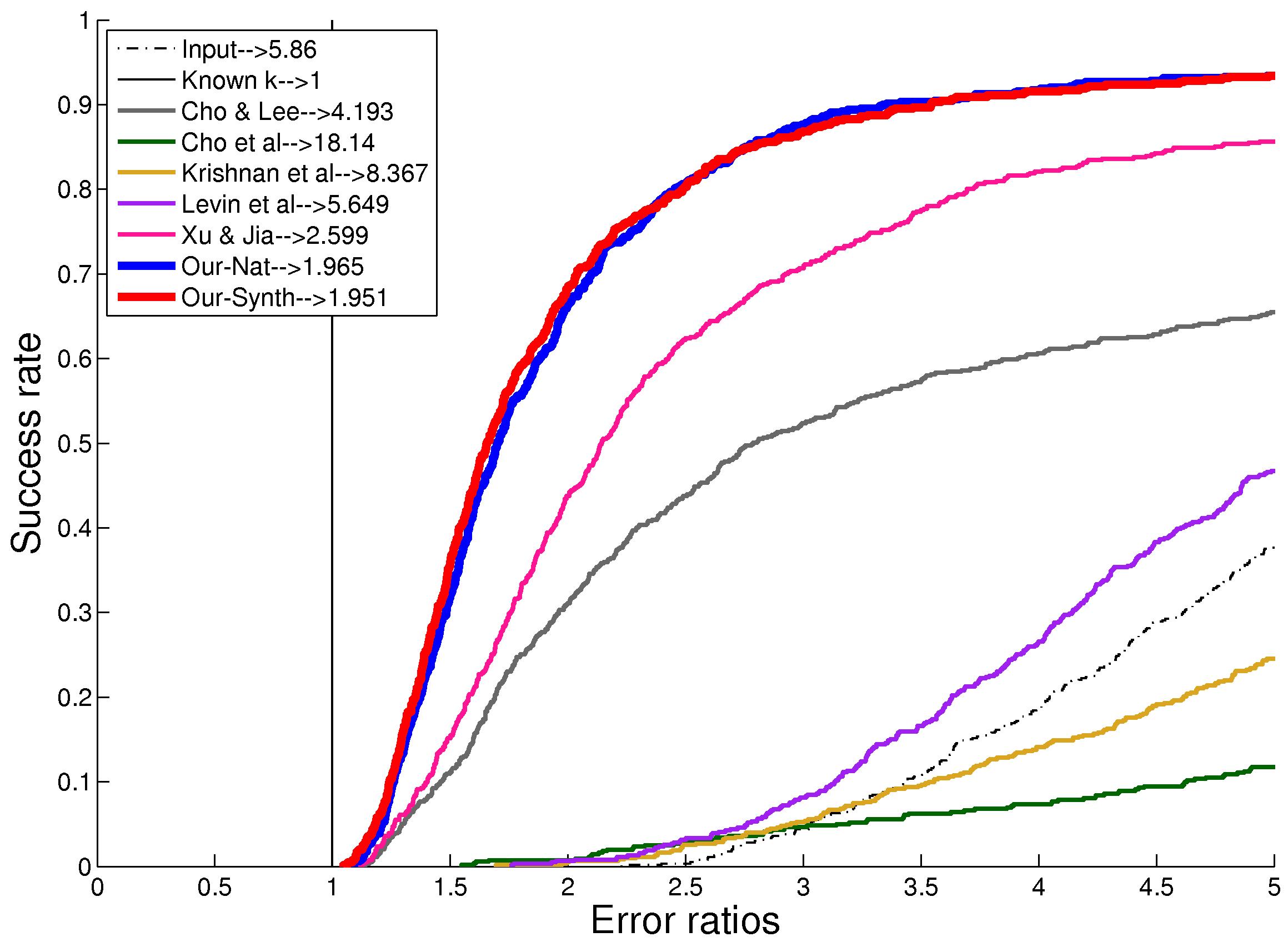

Results on our test set (640 images)