My alignment algorithm uses the provided circular shift method to apply a given pixel offset to a color channel, then computes the sum of squared differences (SSD) between the color intensities of the two compared channels. The SSD is taken over the interior 50% of the photo only, to avoid counting the border. We want to choose the offset that minimizes this sum. It is important to note that SSD on color intensity is not a perfect metric for this task. In fact, we can imagine a perfectly aligned image where the underlying intensities are very different (e.g. if the composed color is just "pure" red, green, or blue). To get around this, my implementation uses SSD on a combination of colors and detected edges (when cheap to compute); this is described in more detail below.
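The metric described above can be sketched as follows. This is a minimal illustration in Python with NumPy rather than the Matlab used for the project; the function name `interior_ssd` is hypothetical, `np.roll` stands in for the provided circular shift method, and "interior 50%" is interpreted here as the middle half of each dimension, which is an assumption.

```python
import numpy as np

def interior_ssd(ref, moving, dy, dx):
    """SSD between ref and a circularly shifted copy of moving, over the central region."""
    # np.roll wraps pixels around the image, like a circular shift.
    shifted = np.roll(moving, shift=(dy, dx), axis=(0, 1))
    h, w = ref.shape
    # Assumption: "interior 50%" means the middle half of each dimension.
    rows = slice(h // 4, h - h // 4)
    cols = slice(w // 4, w - w // 4)
    diff = ref[rows, cols].astype(np.float64) - shifted[rows, cols]
    return float(np.sum(diff ** 2))
```

A lower value means the shifted channel matches the reference more closely inside the window; a shift that exactly undoes a circular displacement gives 0.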

The inputs to my alignment algorithm are the three equal-sized color channels (evenly divided from the source image). My process is to align the green channel to blue, then align the red channel to blue. Transitively, red and green will also be aligned. For each of these steps, I recursively halve the images in each dimension until they are sufficiently small: in my case, at most 400 pixels in the larger dimension, which is enough to handle the smallest sample images without recursion. At that size I can search locally over offsets in the range [-15, 15] pixels in each dimension. This search space is small enough to be fast and large enough to find the best alignment for most offset channels.
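The local search over a small window of offsets might look like the sketch below (Python with NumPy, not the project's Matlab). The name `best_offset` and its parameters are hypothetical, and the scoring window is assumed to be the middle half of each dimension.

```python
import numpy as np

def best_offset(ref, moving, radius=15, center=(0, 0)):
    """Try every offset within +/-radius of center; return the (dy, dx) with the lowest SSD."""
    h, w = ref.shape
    # Assumed scoring window: the middle half of each dimension.
    rows, cols = slice(h // 4, h - h // 4), slice(w // 4, w - w // 4)
    target = ref[rows, cols].astype(np.float64)
    best, best_score = center, np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            score = np.sum((target - shifted[rows, cols]) ** 2)
            if score < best_score:
                best, best_score = (dy, dx), score
    return best
```

With blue as the reference, the two alignment steps would then be `best_offset(b, g)` followed by `best_offset(b, r)`, where `b`, `g`, and `r` are placeholder names for the three channels.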

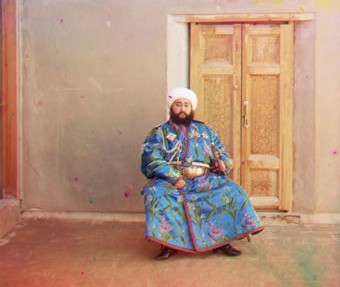

In the base case, my SSD is computed not on color intensities, but on the edges detected in the image. I apply a Sobel filter to both compared channels to find edges (gradient magnitude). In some photos (e.g. the photo of the man sitting in the blue robe), this got me around the SSD color problem described above, because edges are almost always present at some intensity in each channel of a photo.

When the base estimate is returned to a higher level, that estimate is multiplied by 2 (because each level doubles the resolution) and used as the starting point for a local search. At these higher levels, I no longer compute SSD on the edges, both because it is expensive to compute on larger images, and because SSD on color seems to work well as long as the search window is relatively small. At these levels, I also narrow the search window to [-5, 5] pixels in each dimension. In theory, this smaller space should be enough to adjust the alignment at the higher (doubled) resolution, and this worked well in my results.
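The coarse-to-fine recursion can be sketched as follows, in Python with NumPy rather than Matlab. Several details are assumptions made to keep the example short: the names `search` and `pyramid_align` are hypothetical, halving is done by keeping every second pixel, and intensity SSD is used at every level (the base case described above uses Sobel edges instead).

```python
import numpy as np

def search(ref, moving, center, radius):
    """Best (dy, dx) within +/-radius of center, scored by SSD over the middle half."""
    h, w = ref.shape
    rows, cols = slice(h // 4, h - h // 4), slice(w // 4, w - w // 4)
    target = ref[rows, cols].astype(np.float64)
    best, best_score = center, np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            score = np.sum((target - shifted[rows, cols]) ** 2)
            if score < best_score:
                best, best_score = (dy, dx), score
    return best

def pyramid_align(ref, moving, base_size=400, base_radius=15, refine_radius=5):
    """Recursive alignment: wide search at the coarsest level, narrow refinement above it."""
    if max(ref.shape) <= base_size:
        # Base case: image is small enough for the wide search.
        return search(ref, moving, (0, 0), base_radius)
    # Assumption: halve each dimension by dropping every second row and column.
    dy, dx = pyramid_align(ref[::2, ::2], moving[::2, ::2],
                           base_size, base_radius, refine_radius)
    # One pixel at the coarse level is two pixels here, so double the estimate.
    return search(ref, moving, (2 * dy, 2 * dx), refine_radius)
```

Each level only has to correct the rounding error introduced by doubling the coarser estimate, which is why a narrow refinement window can suffice.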

Most aligned images will still have problems at the border due to the way the channels were originally spliced into one image. My cropping works as follows:

I also experimented with cropping by checking scan-lines across edges, but inconsistencies in the borders caused problems. In my implementation, the worst-case scenario (when non-trivial parts of the image are interpreted as border) results in a 20% crop in each dimension.

I adjust the white balance automatically by finding the brightest pixel that is close to white, and assuming it is white in the real scene. To make this pixel white in the composite image, I divide each channel (R, G, B) by its color value at this pixel. This is an approximation, and it can produce values greater than 1.0 at some pixels (which Matlab will clip), but it seemed to work well (as seen in the photo of the man sitting outside, which had an orange tint before balancing).
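A minimal sketch of this white-balance step, in Python with NumPy, is shown below. The writeup does not say how "close to white" is measured, so the test used here (the spread between the largest and smallest channel value is at most `tol`) is an assumption, as are the name `white_balance` and the explicit clipping that stands in for Matlab's behaviour.

```python
import numpy as np

def white_balance(rgb, tol=0.2):
    """rgb: float array of shape (H, W, 3) with values in [0, 1]."""
    spread = rgb.max(axis=2) - rgb.min(axis=2)   # small spread = nearly grey or white
    brightness = rgb.sum(axis=2)
    # Assumption: a pixel counts as "close to white" when its channel spread is <= tol.
    candidates = np.where(spread <= tol, brightness, -np.inf)
    if not np.isfinite(candidates).any():
        return rgb.copy()                        # no near-white pixel found; leave unchanged
    y, x = np.unravel_index(np.argmax(candidates), candidates.shape)
    white = np.maximum(rgb[y, x], 1e-6)          # guard against division by zero
    # Scale each channel so the chosen pixel becomes (1, 1, 1); clip overshoot to 1.0.
    return np.clip(rgb / white, 0.0, 1.0)
```

Pixels that were brighter than the chosen reference in some channel end up above 1.0 before clipping, which is the approximation noted above.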

A final task was to increase color contrast. I achieve this by stretching the range of color values in each channel across the full range [0.0, 1.0]. For each channel, I find the min value, subtract it from the entire matrix (ensuring that the lowest color is now 0.0 intensity), and then divide by the new max value (ensuring that the brightest color is now 1.0 intensity). This could be improved by considering perceptual contrast in the composite image instead of individually in the channels, but the simple approach gave decent results on my data set.
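The per-channel stretch is simple enough to show directly. The sketch below uses Python with NumPy rather than Matlab; the name `stretch_channels` is hypothetical, and the guard for a completely flat channel is an addition not mentioned in the writeup.

```python
import numpy as np

def stretch_channels(rgb):
    """Linearly rescale each channel of an (H, W, 3) image to span [0.0, 1.0]."""
    out = rgb.astype(np.float64)       # astype returns a copy, so the input is untouched
    for c in range(out.shape[2]):
        out[..., c] -= out[..., c].min()   # darkest value becomes 0.0
        peak = out[..., c].max()
        if peak > 0:                       # a flat channel has no range to stretch
            out[..., c] /= peak            # brightest value becomes 1.0
    return out
```

Because each channel is stretched independently, the color balance of the composite can shift slightly, which is the limitation noted above.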
