CS 195-G: Final Project

Virtual Relighting

Virtual relighting allows the illumination of a scene to be adjusted after it has been recorded. Its main application is in the film industry, where an actor's performance can be recorded once and then placed into any virtual environment with the correct illumination. Paul Debevec, who has done a lot of work in this area (see Virtual Cinematography: Relighting through Computation), performed virtual relighting by building a "light stage" to record an actor's performance. A light stage is a dome of lights and cameras that captures the subject under many different lighting directions in rapid succession:

Using a light stage and virtual relighting to capture walking

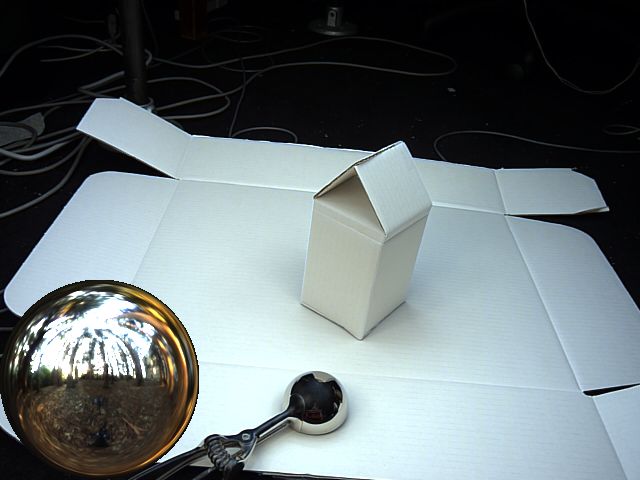

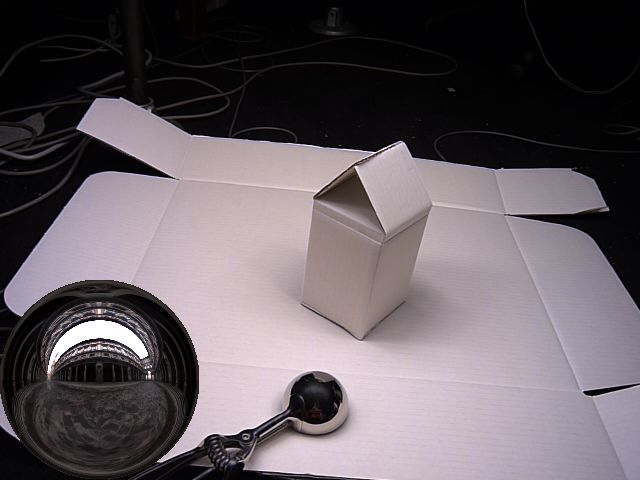

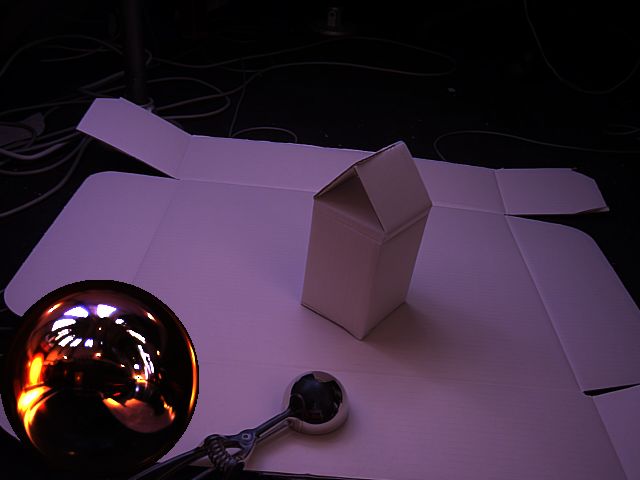

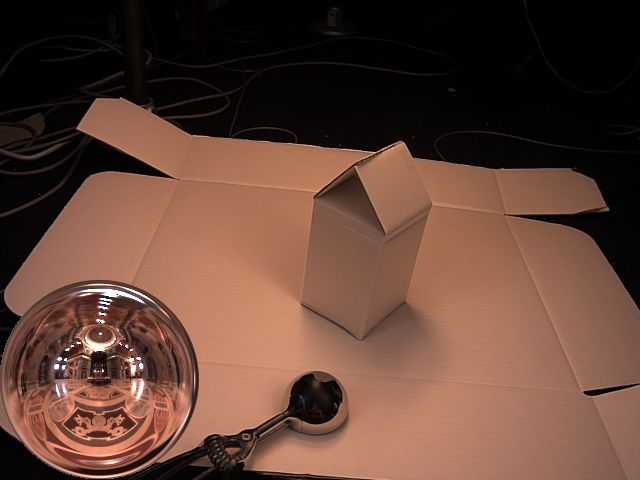

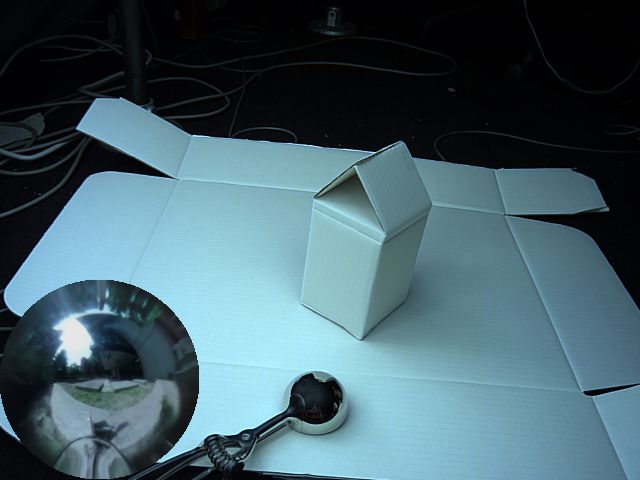

I emulated an earlier version of Debevec's light stage, shown below. It is meant for static scenes, and slowly scans a single light around the scene, recording images as it scans.

Using a light stage for static capture

The first step was to record a video of the light source being swept around the object. This has the effect of sampling the incoming light from all possible directions. Since light is additive, multiple lights can be simulated with a weighted sum of the individual samples. To reconstruct the position of the light from the video, I used a mirrored hemisphere (OK, it's an ice cream scoop) with a user-specified position and mask. Samples that were too close to existing samples were ignored, so that holding the light in one place for a while didn't bias the sample set.

The recovered light path

The indicated sphere location

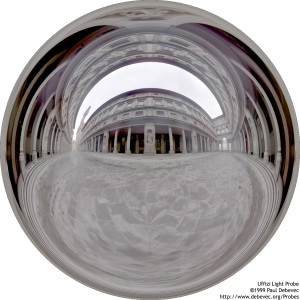
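As a rough illustration of how a light direction could be recovered from the mirrored hemisphere, here is a minimal sketch. It assumes an orthographic camera looking straight at the sphere and that the light shows up as a single highlight pixel (for example, the brightest pixel inside the mask). The function names, the pixel conventions, and the 2 degree spacing threshold are all hypothetical and are not taken from the original implementation.

```python
import math

def light_direction_from_highlight(px, py, cx, cy, radius):
    """Unit vector toward the light, given the highlight pixel (px, py) on a
    mirrored sphere with image center (cx, cy) and radius in pixels.
    Assumes an orthographic camera looking down the -z axis."""
    # Position of the highlight on the sphere's disc, normalized to [-1, 1].
    x = (px - cx) / radius
    y = (cy - py) / radius  # image rows grow downward, so flip y
    r2 = x * x + y * y
    if r2 > 1.0:
        raise ValueError("highlight lies outside the sphere mask")
    # Surface normal of a unit sphere at that point.
    n = (x, y, math.sqrt(1.0 - r2))
    # Reflect the view vector v = (0, 0, 1) about the normal: l = 2(n.v)n - v.
    d = n[2]
    return (2.0 * d * n[0], 2.0 * d * n[1], 2.0 * d * n[2] - 1.0)

def keep_distinct(directions, min_angle_deg=2.0):
    """Drop any direction closer than min_angle_deg to one already kept,
    so lingering in one spot does not over-represent that direction."""
    cos_limit = math.cos(math.radians(min_angle_deg))
    kept = []
    for d in directions:
        if all(sum(a * b for a, b in zip(d, k)) < cos_limit for k in kept):
            kept.append(d)
    return kept
```

A highlight at the center of the sphere maps to a light directly behind the camera, and highlights nearer the rim map to lights farther around to the side.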

With these samples, a novel light can be applied simply by interpolating between the nearest samples and multiplying by the new light color. Because light is additive, simulating any set of light sources only requires simulating each one individually and summing the results. Sets of light sources are often stored as spherical angular maps like the ones below. Each image records all incoming light at a single point in space (360 by 180 degrees).
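The additive relighting described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the recorded frames are assumed to be linear (not gamma-encoded) floating point images, and the light probe is assumed to have already been sampled into one RGB color per recorded light direction. The name `relight` and the array layouts are hypothetical.

```python
import numpy as np

def relight(basis_images, weights):
    """Weighted sum of single-light images.

    basis_images: array of shape (S, H, W, 3), one image per light sample.
    weights: array of shape (S, 3), the RGB color of the new lighting
             arriving from each sample's direction.
    Returns the relit image with shape (H, W, 3).
    """
    basis_images = np.asarray(basis_images, dtype=np.float64)
    weights = np.asarray(weights, dtype=np.float64)
    # Tint each sample image by its light color, then add them all up.
    return np.einsum("shwc,sc->hwc", basis_images, weights)
```

Every sample image contributes to every output frame, which is why the cost grows with the number of samples.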


Here are some relighting results using some of Paul Debevec's light probes, which are shown in the bottom left corner of each image. Notice how there are shadows in the scene opposite strong light sources in the light probe. You can also faintly see the image of the lighting environment in the reflection of the mirrored sphere.

Eucalyptus Grove, UC Berkeley

The Uffizi Gallery, Florence

Grace Cathedral, San Francisco

St. Peter's Basilica, Rome

To create an animation I used the Sky Probe Dataset from USC, which has one light probe of the sky recorded every 10 minutes for an entire day. I ran into problems recording videos in my room because the light reflecting off the white walls was washing out the results, so I had to record the videos in a dark room with black walls.

The virtual relighting of a piece of paper. The lighting environment used is shown in the lower left, and the flickering in the beginning is due to the cloud cover.

The number of samples required can get pretty large, which slows down video synthesis a lot because every sample contributes to every frame. To speed this up and reduce the number of operations required per frame, I tried using Polynomial Texture Maps (PTMs) to compress the data. PTMs are just a per-pixel quadratic regression of the form:

$$p_i(u, v) = a_i u^2 + b_i u v + c_i v^2 + d_i u + e_i v + f_i$$

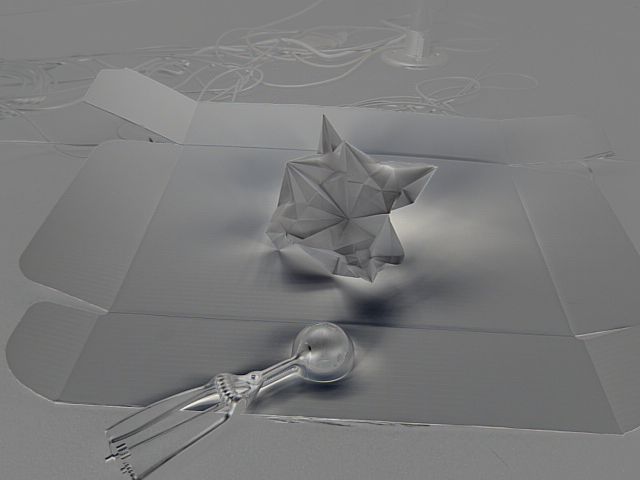

Here u and v are the two parameters that define the incoming light direction (for example, the azimuth and inclination angles from spherical coordinates), and p is the pixel color (at index i in the image). The six values a through f are the per-pixel polynomial coefficients, visualized below. Each coefficient has been scaled to visible range such that gray is zero, darker values are negative, and lighter values are positive.

a

b

c

d

e

f
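The per-pixel quadratic regression can be written as one least-squares solve shared by all pixels. The sketch below is an assumption about how such a fit might be set up, not the original code: it handles a single color channel, takes one (u, v) pair per recorded sample, and the names `ptm_basis` and `fit_ptm` are made up for illustration.

```python
import numpy as np

def ptm_basis(u, v):
    """Quadratic basis [u^2, uv, v^2, u, v, 1] for each light direction."""
    u = np.asarray(u, dtype=np.float64)
    v = np.asarray(v, dtype=np.float64)
    return np.stack([u * u, u * v, v * v, u, v, np.ones_like(u)], axis=-1)

def fit_ptm(u, v, images):
    """Fit the six coefficients a..f for every pixel.

    u, v: arrays of shape (S,), light direction parameters per sample.
    images: array of shape (S, H, W), one single-channel image per sample.
    Returns an array of shape (6, H, W) ordered as a, b, c, d, e, f.
    """
    images = np.asarray(images, dtype=np.float64)
    s, h, w = images.shape
    A = ptm_basis(u, v)            # (S, 6) design matrix
    B = images.reshape(s, h * w)   # each column holds one pixel's samples
    # One least-squares solve handles every pixel column at once.
    coeffs, *_ = np.linalg.lstsq(A, B, rcond=None)
    return coeffs.reshape(6, h, w)
```

At least six well-spread light directions are needed for the fit to be determined; with more samples the regression averages over them, which is consistent with the softening of shadows and highlights.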

PTMs have the effect of blurring shadows and specular highlights, but can be displayed in real time because each pixel requires only six multiplications and five additions. To accommodate multiple lights per frame, the polynomial is adjusted based on samples of the lighting environment. Below, $u_s$ and $v_s$ are the parameters that define the light direction of sample $s$, and $w_s$ is the color of that sample.

$$p_i = a_i \sum_s w_s u_s^2 + b_i \sum_s w_s u_s v_s + c_i \sum_s w_s v_s^2 + d_i \sum_s w_s u_s + e_i \sum_s w_s v_s + f_i \sum_s w_s$$

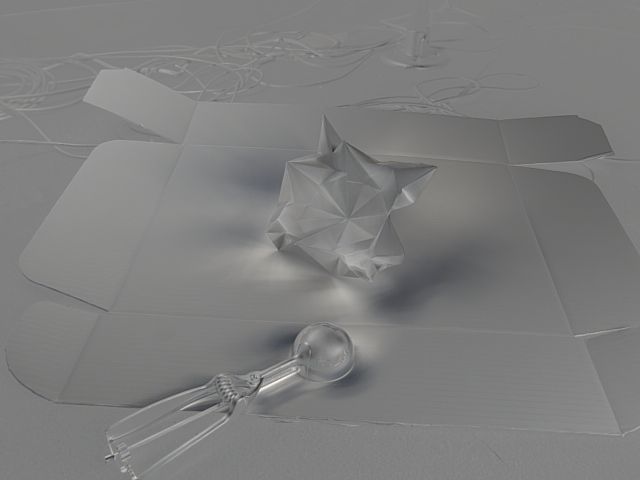

This method maintains real-time performance because each sum is computed once per frame and the number of samples is relatively small. The sample locations can be chosen intelligently using median cut (see A Median Cut Algorithm for Light Probe Sampling), although I just used my original sample points. Below is the same scene relit using PTMs; note the increased softness of the shadows:

The same scene from the previous video using PTMs

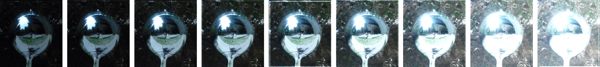
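To make the multi-light PTM evaluation concrete, here is a small sketch of the two stages: the six sums over the lighting samples, computed once per frame, and the per-pixel evaluation that reuses them. For simplicity it treats each sample weight as a single number (one color channel); the function names are hypothetical.

```python
def light_sums(samples):
    """Accumulate the six weighted sums over lighting samples.

    samples: iterable of (u, v, w) tuples, where (u, v) is the light
    direction and w is the sample's intensity in one color channel.
    Returns sums ordered to match the coefficients a, b, c, d, e, f.
    """
    sums = [0.0] * 6
    for u, v, w in samples:
        basis = (u * u, u * v, v * v, u, v, 1.0)
        for k, term in enumerate(basis):
            sums[k] += w * term
    return tuple(sums)

def shade_pixel(coeffs, sums):
    """Evaluate one pixel: six multiplications and five additions."""
    return sum(c * s for c, s in zip(coeffs, sums))

# The sums depend only on the lighting, so they are shared by every pixel.
frame_sums = light_sums([(0.5, -0.25, 1.0), (-0.3, 0.8, 0.4)])
pixel_value = shade_pixel((1.0, 2.0, 3.0, 4.0, 5.0, 6.0), frame_sums)
```

Because the expression is linear in the weights, shading with several lights at once gives the same result as shading with each light separately and adding the results.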

I also attempted to make my own light probe. The easiest way to construct a light probe is to take a photograph of a mirrored sphere, which actually captures all light coming towards the center of that sphere (this is how the previously used light probes were constructed). I used nine exposures to reconstruct a high dynamic range image using HDR Shop. I also used a second image of the mirrored sphere to remove the camera and myself from the image:

My light probe (outside Grad Center)

The first set of exposures

The second set of exposures

My light probe used for virtual relighting
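For readers curious about what merging bracketed exposures involves, the following is a generic weighted-average sketch. It is not HDR Shop's algorithm. It assumes the input images are already linear (no camera response curve to undo), scaled to the range 0 to 1, and that the exposure times are known; the function name and the hat-shaped weighting are illustrative choices.

```python
import numpy as np

def merge_exposures(images, exposure_times):
    """Combine differently exposed images into one radiance estimate.

    images: array of shape (N, H, W), linear pixel values in [0, 1].
    exposure_times: array of shape (N,), exposure time of each image.
    """
    images = np.asarray(images, dtype=np.float64)
    times = np.asarray(exposure_times, dtype=np.float64).reshape(-1, 1, 1)
    # Hat weight: trust mid-range pixels, distrust near-black and clipped ones.
    weights = 1.0 - np.abs(2.0 * images - 1.0)
    total = weights.sum(axis=0)
    # Each exposure estimates radiance as value / time; average the estimates.
    estimate = (weights * images / times).sum(axis=0)
    # Pixels with zero total weight (clipped in every exposure) come out as 0.
    return estimate / np.maximum(total, 1e-8)
```

Bracketing matters for a light probe because direct light sources such as the sun are far brighter than the rest of the scene, so no single exposure records both without clipping.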