This project investigates an application of image morphing. This application is referred to in previous work as Visual Speech Synthesis (Ezzat and Poggio 2000). This is simply the creation of a video to accompany spoken text by image morphing between photographs of a person pronouncing the appropriate phonemes. These images are referred to as visemes.

Visual Speech Synthesis in full involves first running speech recognition software and inferring the constituent phonemes and their onset timings. As this is not in the realm of computer graphics and presents a considerable challenge to code, this portion is not addressed in this project. Instead, sound clips were manually annotated by observation of the waveform. The phonemes were extracted from the written version of the audio clip using RiTa, a Processing library designed for digital art and literature which contains a simple text to phoneme component. Furthermore, the visemes themselves must be aligned as best is possible. While this could easily be done automatically, I did it by hand using the GIMP.

I did perform a deep investigation of the open source speech recognizer SPHINX (Version 4) from CMU. While I was able to recover word onset timings and word pronunciation results (phonemes which could be mapped many to one onto visemes), individual phoneme timings are not easily accessible. I believe significant understanding of the decoding HMM code is necessary to access this information.

Given the tuples of viseme and onset timing, all that is required to compose a Visual Speech Synthesis video are correspondence points across all of the images to input to the image morphing code from Project 5. Whereas this in principle involves manually tagging very few images, the problem it presents is interesting. The specific formulation employed here involves a data set of 18 visemes in which one of them is manually tagged with interest points. The task is to infer best matches in the other 17 images for each of these interest points.

I carried out some experiments using automatic point detection with the harris point detector from Project 6, but using adaptive non-maximal suppression leads to undesirable results. This is not only due to the choice of interest points on the wall next to the subject but also due to the requirement of our application that we carefully model the mouth in order to generate smooth transitions. Adaptive non-maximal suppression does not allow for this variability in point density. If this process were to be carried out automatically, a high level mouth detector would have to be constructed to inform the software of the region in which higher point density is desired. This is furthermore only one component of a toolbox which would be required to perform robust point selection; for example some code to segment the face and the background would be necessary. For these reasons, in this project I investigate the problem as stated above, by finding correspondences for a set of manually annotated points.

The images used in this project were captured based on the following set of phonemes defined for speech animation of 3D models

The actual viseme images can be seen here

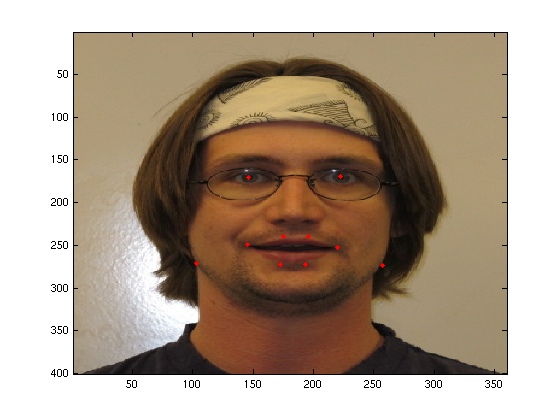

The manually tagged input image (on the AA viseme) uses the following interest points.

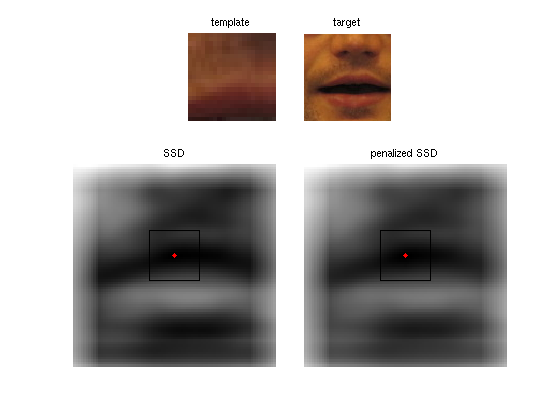

The problem as stated above is a template matching problem. I employed two template matching heuristics, cross-correlation and SSD calculated through convolution as in Project 4. In the description below the template is the signature which we are searching for in the target image. I will refer to the first image in which the points are manually labeled as the prime image.

This template matching method involves rotating the template by 180 degrees and then convoluting it with the target image. The template used was a square window of size 21 in the prime image, centered around an interest point. The target image in a viseme which is not the prime image is a square window of size 81 centered at the location of the interest point in the prime image. This choice is motivated by the assumption that the images are prealigned and that the deformation of the face betwee visemes is not very large.

I experimented with cross-correlation on each color channel individually, summing the resulting correlation maps over the RGB channels, and also by converting the image to greyscale. Additionally I tried converting the target and mask to edge images using the canny edge detector provided in Matlab. The best results obtained with this method were using the greyscale image with edge detection images. A sample template, target, and correlation map is shown below.

This particular match shown returns a good result. However, performance is not satisfactory overall. Only considering the important interest points along the mouth edge, from inspection very few interest points were correctly found using this method.

I also tried including a distance penalty by subtracting a penalty image calculated as the distance from the center of the target window (the location of the prime image's interest point in the target image). This constrains the deformation of the resulting morph grid, but in principle requires parameter tuning and does not improve results by a large amount; the resulting points are often still wrong but just closer to the original location.

The second technique I tried was using SSD, which performed much better. Performance was additionally improved through the use of a distance penalty as described above, although in this case the penalty is added instead of subtracted. Minor parameter tuning was required to scale the strength of the distance penalty; scaling by 1/10 gave very good results (see link below). The implementation employed was the one from the scene completion assignment in which the SSD of a template is calculated against all offsets in the target image using two convolutions. For the best results (sampled below) I first converted the template and target to greyscale, and used imfilter to perform the convolution. As previously mentioned, this worked quite well, most likely due to the manual alignment of the images.

The morph points recovered in this manner can be seen here here.