Dense Realtime Optical Flow on the GPU

Paul Sastrasinh (psastras)

CSCI1290 Final Project

For this project, I implemented realtime dense Lucas-Kanade pyramidal iterative optical flow and feature detection on the GPU using OpenGL and GLSL.

Optical Flow

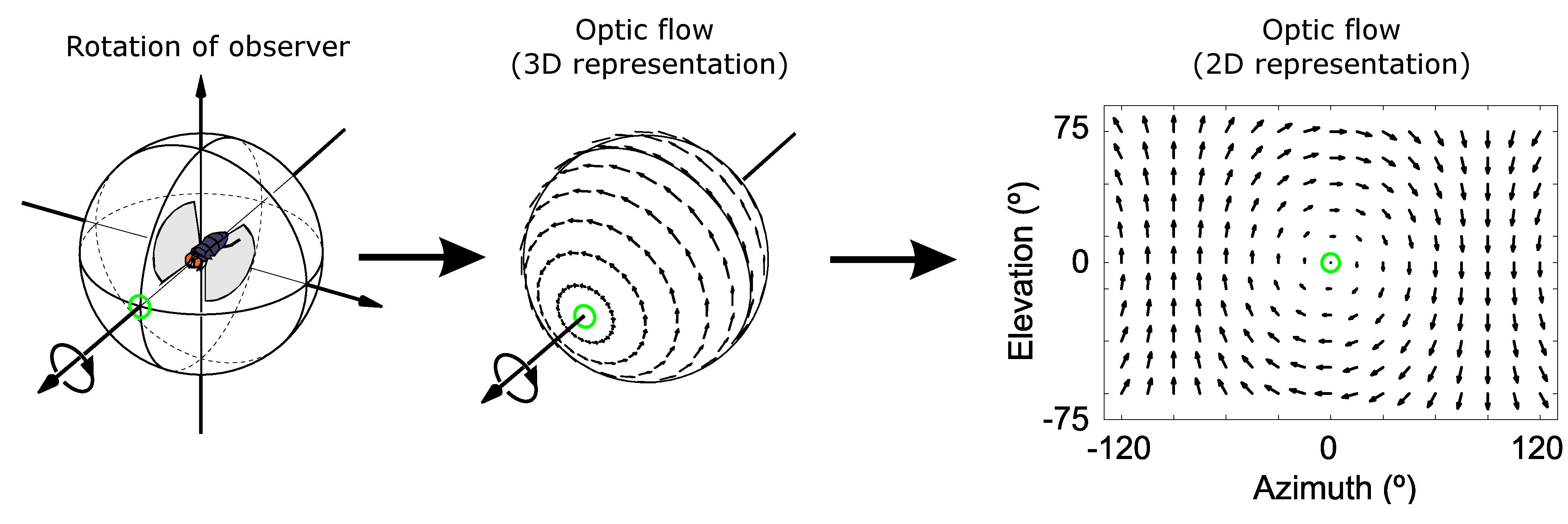

Optical flow algorithms aim to estimate the motion of pixels in a sequence of images as they travel from frame to frame. The full optical flow equation is not solvable, since it is one equations with two unkowns (of x and y velocity). Instead, all optical flow algorithms introduce another constraint equation to make the problem solvable. Below is Wikipedia's silly diagram (it just looks nice):

Optical flow has several uses, including video encoding, video retiming, and vido stabilization. Optical flow has also been used to track objects between video frames.

The Lucas-Kanade algorithm [Bruce D. Lucas, Takeo Kanade, 1981] for determining optic flow assumes an affine model where the displacement of pixels between frames is relatively small and constant within a local neighborhood.

In Tomasi and Kanade's publication, they extend the original work of Lucas and Kanade to include a method for determing and tracking good features. The results of both these works and further improvement to the LK algorithm is summed up nicely in Intel's 2001 paper , which is the primary reference for this project.

Most optical flow implementations first run a dense optical flow to determine good features to track according to Tomasi and Kanade. They then track this sparse set of features from frame to frame. Constantly computing a dense optical flow each frame is computationally expensive (at each pixel, multiple least squares problems must be solved). However, having a dense vector flow field may be desirable in some applications.

Algorithm

The iterative pyramid LK algorithm consists of several steps.

- Build an image pyramid of the two image frames.

- Set the initial flow vector guess for each pixel to (0,0)

- For each level of the pyramid, calculate the x and y derivatives of the first image.

- For each level of the pyramid, starting at the smallest level we compute the optical flow for that level of the pyramid:

- For a certain number of itertations, solve the LK least squares for a given window, and set the new guess as the solution. Repeat iteration until threshold or maximum iterations has been reached.

- Propogate velocities from the lower level to the next level and go back to four until the maximum level of the pyramid has been reached.

For the full equations, read Intel's paper: here.

Pyramidal optical flow allows regions without many edges to have the correct flow estimates (otherwise the algorithm would estimate these regions to be zero even though the whole region may be moving).

Implementation

The initial implementation was done in C++, using OpenCV to read video frames from a file or camera. Even after extensive optimizations (loop reordering, vectorization, etc.), the implementation was unable to run in real time, mainly because the algorithm complexity of pyramidal LK is .

Most computer vision algorithms operating on a per pixel basis are well suited for GPU parallelization, since GPU kernel programs can be quickly and easily applied to all the pixels at the same time.

For this project I decided to use GLSL (as opposed to a GPGPU language such as CUDA), since GLSL can be run on almost any GPU and because the nature of the problem can be easily integrated to the traditional graphics pipeline (there is no need to use a general purpose language, when we're processing images).

Pipeline

OpenCV is used to read video files or capture video from an attached camera. The program requires GLEW to compile and run (since OpenGL on Windows is a complete mess).

Most of the operations implemented on the GPU rely on the ability to render full screen quad to the drawing surface and applying a pixel shader during this draw pass. By rendering a full screen quad with a texture on it, it ensures that the shader (essentially a kernel function), is run once for each pixel on the texture.

As images are captured from the device, image pyramids are created, and the x and y derivatives are computed on the CPU. I experimented with doing this on the GPU (code is written, and GPU image pyramids can be enabled), but the current version does this solely on the CPU. Once each pyramid level has been generated, they are uploaded to the GPU as single channel 32 bit floating point textures. These textures are then fed into the optical flow computation shader which runs the LK algorithm for each texel. It outputs an RGBA32F texture, where the red and green channels store the x and y velocities, and the blue channel stores the compouted eigenvalues (used for determining which features to track). The alpha channel is left unused.

An initial implementation resulted in frame rates only slightly faster than the CPU implementation. The apparent cause of the slowness is due to the compiler being unable to optimize certain loops and other constructs due to too many unknown uniform variable values. To fix this, after loading a video or pulling a frame from an attached camera, optimized shaders are constructed and compiled on the fly during runtime based on the template optical flow shader.

Optical Flow Method

Main Optical Flow Shader File

Results

30fps is easily achievable using higher settings in the GPU implmentation, even at higher resolutions and more iterations. Here are some captured frames from some video sequences.