Project 4: Stereo Pinhole Camera

Due Date: 11:59pm on Wednesday, March 16th, 2011

Brief

- This handout: /course/cs129/asgn/proj4/handout/

- Stencil code: /course/cs129/asgn/proj4/stencil/

- Data: /course/cs129/asgn/proj4/data/

- Handin: cs129_handin proj4

- Required files: README, code/, html/, html/index.html

Requirements

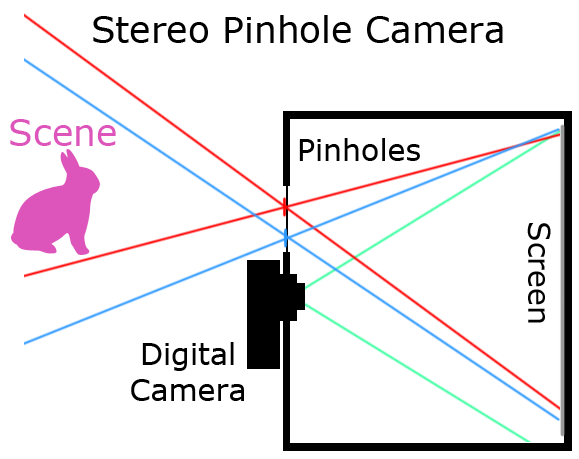

You will build a physical stereo camera from a box, red and cyan color filters, and a digital camera, and create rough 3d reconstructions from your stereo images. You can construct your stereo pinhole camera and acquire images in groups of up to 4 (make sure someone has a decent camera!). Individually, you will warp the acquired stereo images into Standard Rectified Geometry, determine disparity, compute depth, and create rough 3d reconstructions.

Details

MATLAB stencil code is available in /course/cs129/asgn/proj4/stencil/. You're free to do this project in whatever language you want, but the TAs are only offering support in MATLAB. The stencil code for this project provides code to manually specify stereo correspondences, to do coarse 3d reconstruction from a depth map, and to save animated .gif stereo pairs. By default the stencil code demonstrates correspondence specification and 3d reconstruction on two example scenes.

The overall pipeline for this project is as follows:

- Build a stereo pinhole camera

- Acquire anaglyph photographs of scenes

- Separate anaglyph color channels into a stereo image pair (this is trivial)

- Warp stereo pair into Standard Rectified Geometry based on the geometry of your camera

- Manually specify correspondences between stereo pair (code provided)

- Convert sparse disparities into dense disparity map (code provided)

- Convert disparity to depth, based on the geometry of your camera

- Create a rough 3d reconstruction of scene (code provided)

Constructing a Stereo Pinhole Camera

You can build your camera in groups of up to 4. Make sure that at least one person has access to a decent digital camera with manual exposure and focus controls. Compact cameras have the advantage that they are easier to mount to your box without blocking the pinhole's line-of-sight, and digital SLRs have the advantage that they are more sensitive. Resolution isn't likely to be an issue -- your pinhole camera will be blurry.

A camera obscura is (literally) a "dark chamber" with a small hole on one side and a screen on the opposite side for light to project on to. If the hole is small (perhaps a few millimeters) then the projected image will be fairly sharp, although quite dim. It is hard to see such an image with the naked eye, but with a long exposure digital photograph (perhaps 5 to 30 seconds) the image becomes clear.

To build your pinhole camera, I suggest the following steps (although feel free to improvise):

1. Find a cardboard box. I used a shoebox, although a larger box would be fine. Make sure the walls of the box don't allow light through. Ideally the box would be dark on the inside to minimize interreflections. If the box is light colored you might try covering the interior with dark paper. Also, make sure light doesn't leak through the corners -- apply duct tape liberally until the box is "light tight".

2. Decide which face of the box you want to put your pinhole(s) in. This will determine which face of the box the image is formed on (the "screen"). These should probably be the wider faces of your box so that your photos can capture a wide field of view. Make sure that the distance between the pinhole face and the screen face -- the focal length of your pinhole camera -- is not less than the minimum focus distance of your camera, or you will be unable to capture a sharp photo of the screen.

3. Create the actual pinhole(s). Putting the holes directly in the cardboard might be a bad idea because 1) corrugated cardboard is too thick, so a small-diameter pinhole won't permit a wide enough field-of-view, and 2) you might want to adjust the size and spacing of the pinholes. I recommend cutting a larger hole in the cardboard box, attaching a thinner sheet of card stock over it, and making the pinholes in the card stock. The smaller the holes, the sharper your image (you're unlikely to make a hole small enough for diffraction effects to cause issues), but you'll need longer exposure times to compensate for smaller pinholes.

4. Cover the inside wall facing the pinhole with white copy paper.

5. Create a hole for the digital camera. The size of the hole will depend on the size of the camera lens. It needs to be tight fitting so light doesn't get in. Make sure the camera doesn't block the field of view of the pinholes too much. You may try mounting the camera at an angle if it can't get the entire screen in its field of view otherwise.

You'll want to debug the system with an unfiltered pinhole before trying the red / cyan filtered pinholes. It's probably easiest if your digital camera and filtered pinholes are collinear. Determining the best spacing between the filtered pinholes (i.e. the stereo baseline) is tricky. Small baselines make it easier for a human to decipher the resulting anaglyph images and they make it easier to have the fields-of-view from the two pinholes overlap in a single image. But if the baseline is small, the disparity for far away objects will also be very small, so you will only get accurate depth estimates for nearby scene elements.
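To get a feel for this tradeoff, here is a rough worked example using the relation $d = fB/Z$ (equation 11.1 in Szeliski, where $d$ is the disparity measured relative to points at infinity, $f$ the focal length, $B$ the baseline, and $Z$ the depth). The numbers are hypothetical: a focal length of 200 mm and a baseline of 30 mm.

$$
\begin{aligned}
Z = 1\,\text{m}: \quad & d = \frac{200\,\text{mm} \times 30\,\text{mm}}{1000\,\text{mm}} = 6\,\text{mm} \\
Z = 10\,\text{m}: \quad & d = \frac{200\,\text{mm} \times 30\,\text{mm}}{10000\,\text{mm}} = 0.6\,\text{mm}
\end{aligned}
$$

If one photographed pixel covers about 0.25 mm of the screen (again a hypothetical figure), these disparities are roughly 24 and 2.4 pixels, so a one-pixel correspondence error matters far more for the distant object. Doubling the baseline would double both values.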

In order to do accurate depth reconstructions you will need accurate measurements of the baseline (distance between filtered pinholes), the focal length (distance from pinholes to screen), and the width of a pixel on the screen.
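As a minimal sketch of how these measurements fit together, the Python snippet below (the handout allows any language) converts a measured screen width into a per-pixel width and expresses the baseline in pixels. The function names and the example numbers are hypothetical and are not part of the stencil code.

```python
def pixel_width_mm(screen_width_mm, image_width_px):
    """Width of one photographed pixel on the screen, in millimeters.

    Assumes the photo spans the measured screen width with no perspective
    distortion (i.e. the digital camera is not angled).
    """
    if image_width_px <= 0:
        raise ValueError("image width must be positive")
    return screen_width_mm / image_width_px


def baseline_in_pixels(baseline_mm, px_width_mm):
    """Baseline expressed in screen pixels."""
    if px_width_mm <= 0:
        raise ValueError("pixel width must be positive")
    return baseline_mm / px_width_mm


# Hypothetical measurements: a 300 mm wide screen spanning 1200 pixels,
# and pinholes spaced 30 mm apart.
px_mm = pixel_width_mm(300.0, 1200)
print(px_mm)                             # 0.25
print(baseline_in_pixels(30.0, px_mm))   # 120.0
```

Keeping every length in the same unit (millimeters here) avoids scale errors later when disparity is converted to depth.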

Acquiring Anaglyph Photographs

An anaglyph image fuses a pair of stereo images into a single image by separating the images into distinct color channels. The anaglyph glasses provided to you allow you to view such images, although the images directly out of your camera may not work because the color channels will probably have too large a spatial disparity. The filters in our glasses seem to correspond to red and cyan (blue and green) which is probably the most common configuration. You can test your glasses on the Wikipedia images linked to above.

Because a pinhole camera is light starved, your best bet is to capture outdoor images in bright sunlight. The scenes need to be nearly static because you'll be using very long exposure times. Because your stereo baseline will probably be small (half an inch to a few inches), you'll only get significant stereo disparity for nearby scene elements. Thus you'll want to test your stereo pinhole camera on outdoor scenes with nearby objects.
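Once a photograph is captured, the two views can be pulled apart by color channel. The following Python sketch (the handout allows any language) assumes the image is held as rows of (r, g, b) tuples and that averaging green and blue is an acceptable grayscale for the cyan view. The function name `split_anaglyph` is hypothetical, and which view counts as "left" depends on how your filters and camera are arranged.

```python
def split_anaglyph(rgb_rows):
    """Split an RGB image (rows of (r, g, b) tuples) into two grayscale views.

    Returns (red_view, cyan_view). The red view is the red channel; the cyan
    view is the mean of green and blue (an assumption, not the stencil's rule).
    """
    red_view = [[r for (r, g, b) in row] for row in rgb_rows]
    cyan_view = [[(g + b) / 2 for (r, g, b) in row] for row in rgb_rows]
    return red_view, cyan_view


# Tiny hypothetical 1x2 image.
tiny = [[(200, 10, 30), (0, 100, 50)]]
red_view, cyan_view = split_anaglyph(tiny)
print(red_view)    # [[200, 0]]
print(cyan_view)   # [[20.0, 75.0]]
```

Real filters leak some light into the other channels, so expect faint ghosting in each view.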

Standard Rectified Geometry

From this point on you should work individually.

To make the rest of our stereo pipeline as easy as possible, we want our pair of stereo images to be in Standard Rectified Geometry. For an explanation of Standard Rectified Geometry and an overview of stereo setups, read the intro to Chapter 11 and Section 11.1 in Szeliski. You can think of your anaglyph image as being the result of two cameras -- one whose optical center is at the red-filtered pinhole, and the other whose optical center is at the cyan-filtered pinhole. To be in Standard Rectified Geometry, a pair of cameras must be pointing in exactly the same direction, rotated to have the same up vector, and scaled to have the same resolution. If these conditions are met, points at infinite distance will be at the same (x,y) coordinates and corresponding points will have disparity only in the x direction (i.e. the epipolar lines are scanlines).

Luckily we've constructed our stereo pinhole camera such that it is almost in Standard Rectified Geometry. The only wrinkle is that our two virtual cameras are sharing an image plane (the screen) instead of having their own. Thus points that are infinitely far away will not have the same coordinates, and will instead have a disparity equal to your baseline. In the simplest case, where the camera is not angled with respect to the screen, a translation should suffice to put the two virtual cameras into Standard Rectified Geometry. The transformation may be more complex otherwise -- try a homography (see imtransform in MATLAB). Either way, the transformation will be specific to your camera setup and you will need to write the code to handle this. The stencil code provides an empty function rectify_stereo_pair.m where you should put your code. You'll want to crop away the parts of the left and right images which don't overlap. You might also want to reduce the resolution of your images since they are likely blurry anyway.
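For the simplest case (pure translation), the idea can be sketched in Python as below; the handout allows any language, and this is not the interface of the stencil's rectify_stereo_pair.m. The function name `rectify_by_translation` and its arguments are hypothetical. It assumes both views are equally sized lists of rows and that `shift_px` is your baseline converted to whole pixels, with its sign chosen to match your own camera's orientation.

```python
def rectify_by_translation(view_a, view_b, shift_px):
    """Crop two views so column x of view_a lines up with column x + shift_px of view_b.

    After cropping, a point at infinity (originally offset by shift_px between
    the views) lands at the same column in both outputs. Only the overlapping
    columns are kept.
    """
    if shift_px < 0:
        # A negative shift is the same problem with the roles swapped.
        b, a = rectify_by_translation(view_b, view_a, -shift_px)
        return a, b
    width = len(view_a[0])
    if shift_px >= width:
        raise ValueError("shift leaves no overlapping columns")
    a = [row[:width - shift_px] for row in view_a]
    b = [row[shift_px:] for row in view_b]
    return a, b


# Hypothetical one-row views whose content is offset by 2 columns.
a, b = rectify_by_translation([[0, 1, 2, 3, 4]], [[9, 9, 0, 1, 2]], 2)
print(a)   # [[0, 1, 2]]
print(b)   # [[0, 1, 2]]
```

If the digital camera is angled relative to the screen, a translation alone will not be enough, and a homography would be needed instead.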

Disparity Estimation

The heart of a stereo algorithm is to automatically establish a dense correspondence between points in the left and right images such that a dense disparity image can be computed. This disparity image tells us, for every pixel in the left image, how many pixels to the left the corresponding pixel is in the right image. For distant points this disparity will be zero. For nearby points the disparity can be large. The disparity should never be negative.

Instead of automatically computing this dense disparity image with a stereo algorithm, the starter code contains a GUI for you to manually specify corresponding points in the left and right images. You'll want to specify correspondences only at distinct or corner-like image regions where you can be sure that the correspondence is nearly pixel perfect. The starter code will refine these correspondences with a local search (you can try disabling this), and then interpolate the sparse disparity values implied by these correspondences to create a dense disparity image. There are two interpolation methods provided -- one based on Poisson fill, and one based on triangulation. You might experiment with both; each has drawbacks.

Depth Estimation

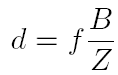

Now that we have a disparity image, computing depth at every pixel in the left image is straightforward. We simply use equation 11.1 in Szeliski:

$$d = f \frac{B}{Z} \quad\Longleftrightarrow\quad Z = \frac{f B}{d}$$

where

- d = disparity

- f = focal length

- B = baseline

- Z = depth
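Rearranging equation 11.1 ($d = fB/Z$) for depth gives $Z = fB/d$. A minimal Python sketch of that conversion follows (the handout allows any language). It assumes disparity arrives in pixels and must be scaled by the measured width of a pixel on the screen so that all lengths share one unit. The function name and example values are hypothetical.

```python
import math


def depth_from_disparity(disparity_px, focal_length_mm, baseline_mm, px_width_mm):
    """Depth Z = f * B / d, with d converted from pixels to millimeters.

    Zero disparity corresponds to a point at infinity; negative disparity is
    rejected because it should not occur after rectification.
    """
    if disparity_px < 0:
        raise ValueError("disparity should never be negative")
    if disparity_px == 0:
        return math.inf
    disparity_mm = disparity_px * px_width_mm
    return focal_length_mm * baseline_mm / disparity_mm


# Hypothetical camera: f = 200 mm, B = 30 mm, 0.25 mm per pixel.
print(depth_from_disparity(12, 200.0, 30.0, 0.25))   # 2000.0 (mm)
print(depth_from_disparity(0, 200.0, 30.0, 0.25))    # inf

# Applying it to a small disparity map, row by row.
disparity_map = [[12, 24], [0, 6]]
depth_map = [[depth_from_disparity(d, 200.0, 30.0, 0.25) for d in row]
             for row in disparity_map]
print(depth_map)   # [[2000.0, 1000.0], [inf, 4000.0]]
```

Because depth is inversely proportional to disparity, small disparity errors on distant points produce large depth errors; clamping very small disparities before plotting may give a more readable surface.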

Given depth values for each pixel, the starter code will use the Matlab function surf() to create a crude 3d rendering of your scene.

Extra Credit

While we have (at the moment) only one concrete suggestion for extra credit, as with previous projects we will consider any interesting extra work for extra credit.

- up to 10 pts: Instead of manually performing correspondence between the stereo pairs, use an interest point detector or corner detector to find reliable points in one image, and then search for them in the other image as constrained by epipolar geometry.
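One possible shape for the search step is sketched below in Python (the handout allows any language). It covers only the epipolar-constrained search, not interest point detection, and it swaps in a plain sum-of-squared-differences patch comparison as the matching cost, which is an assumption rather than a required method. It relies on the images already being rectified, so the match for a left-image point lies on the same row, shifted left by a non-negative disparity. The function name and parameters are hypothetical.

```python
def match_along_scanline(left, right, x, y, half=1, max_disp=16):
    """Return the disparity d >= 0 minimizing SSD between the patch centered
    at (x, y) in `left` and the patch centered at (x - d, y) in `right`.

    `left` and `right` are equally sized grayscale images (lists of rows).
    """
    def patch(img, cx, cy):
        return [img[r][c]
                for r in range(cy - half, cy + half + 1)
                for c in range(cx - half, cx + half + 1)]

    height, width = len(left), len(left[0])
    if not (half <= y < height - half and half <= x < width - half):
        raise ValueError("patch does not fit inside the image")

    ref = patch(left, x, y)
    best_d, best_cost = 0, float("inf")
    for d in range(max_disp + 1):
        cx = x - d
        if cx < half:          # candidate patch would leave the image
            break
        cand = patch(right, cx, y)
        cost = sum((a - b) ** 2 for a, b in zip(ref, cand))
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


# Hypothetical 3x8 images: a bright column at x=5 (left) appears at x=3 (right).
left = [[0, 0, 0, 0, 0, 9, 0, 0] for _ in range(3)]
right = [[0, 0, 0, 9, 0, 0, 0, 0] for _ in range(3)]
print(match_along_scanline(left, right, x=5, y=1))   # 2
```

On blurry pinhole images a larger patch (`half`) is likely to be more stable, at the cost of blurring disparity across depth edges.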

Write up

For this project, just like all other projects, you must do a project report in HTML. In the report you will show and describe your pinhole camera and any interesting decisions you made during construction or image capture time. Show results for at least 5 different scenes. There are many possible ways to visualize your results -- anaglyph images (perhaps with the color channels shifted such that the disparity is easier for humans to view), flickering animated gifs (the starter code will generate these for you), or 3d surfaces (the starter code will generate these, although it won't save images). Keep in mind that the 3d reconstructions will be very crude unless you do a great job building the camera, photographing a scene, aligning your images, and providing many point correspondences.

Remember, even though you can work in groups to build the camera and capture images, all the coding and write-up must be solely your work.

Graduate Credit

There are no additional requirements for graduate credit for this project. But you don't want the undergraduate students to have cooler results, do you?

Handing in

This is very important as you will lose points if you do not follow instructions. Every time after the first that you do not follow instructions, you will lose 5 points. The folder you hand in must contain the following:

- README - text file containing anything about the project that you want to tell the TAs

- code/ - directory containing all your code for this assignment

- html/ - directory containing your entire HTML report for this assignment (including images)

- html/index.html - home page for your results

Then run: cs129_handin proj4

If it is not in your path, you can run it directly: /course/cs129/bin/cs129_handin proj4

Rubric

- +40 pts: Constructing a pinhole camera which captures anaglyph stereo pairs

- +20 pts: Putting images into Standard Rectified Geometry and computing depth based on the measured geometry of your camera and equation 11.1

- +20 pts: Using at least 5 images of scenes you have taken

- +20 pts: Write up

- +15 pts: Extra credit (up to fifteen points)

Final Advice

- In addition to using your stereo pinhole camera you can try capturing stereo images more conventionally -- by directly imaging a scene from two different locations with your digital camera. This has the significant advantages of shorter exposure time, higher resolution, higher contrast, etc., with the disadvantage that it is less straightforward to put the two images into Standard Rectified Geometry and to know the values of the constants in equation 11.1 (stereo baseline, pixels per meter, and even focal length). However, even with incorrect values, your depth estimation might be correct up to some scaling factor, so the visualizations will still look good.

Credits

Project inspired by Antonio Torralba and this guide. Project description and code by James Hays.